Predicting the Success of Startup Ventures

Many factors influence the success or failure of a venture. Some of these factors are within a venture's control, and others are not. This work analyzes these factors and the complex interactions between them to predict venture success.

Theses

Master's Thesis

Lexical & Language Modeling

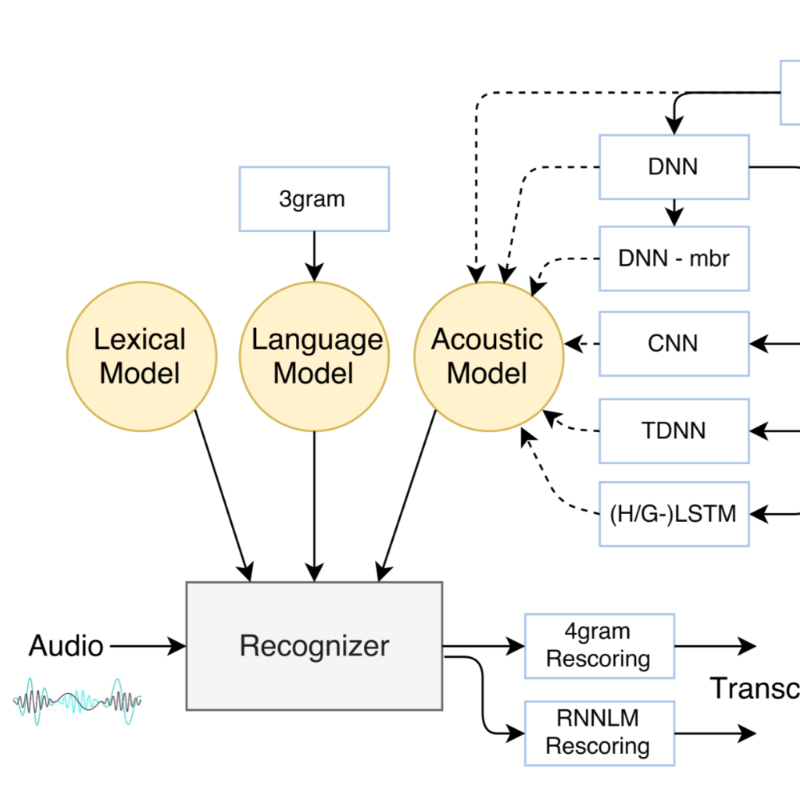

Arabic is a morphologically rich language whose written form rarely includes diacritics. These two features of the language pose challenges when building Automatic Speech Recognition (ASR) systems.

Ph.D. Thesis

Forget-me-not

Dementia comes second only to spinal cord injuries in terms of its debilitating effects, which range from memory loss to physical disability. The standard approach to evaluating cognitive conditions is the neuropsychological exam, conducted via in-person interview to measure memory, thinking, language, and motor skills. Work is ongoing to determine biomarkers of cognitive impairment, yet one modality that remains relatively unexplored is speech.

Modeling Health with Human Signals

A sequence-based neural network model that detects depression from audio and text transcripts of interviews.

Winner of Best Paper Award at Interspeech 2018.

An automated system that evaluates speech and language features from audio recordings of neuropsychological exams.

In collaboration with the Framingham Heart Study.

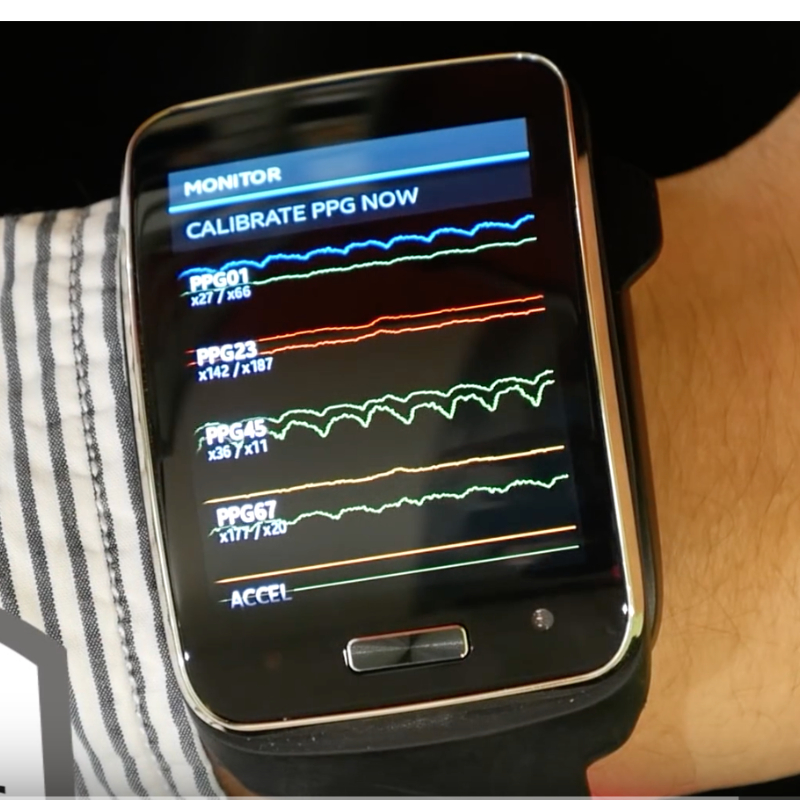

A wearable A.I. that can interpret the mood of a conversation from speech, language, movement, and physiology.

In collaboration with Samsung Corporation.

Cardiac arrest impacts over half a million people a year. Even if successfully resuscitated, patients can enter an indefinite coma. Predicting whether patients will wake up from a coma can prevent premature withdrawal of care.

Making Sense of Medical Data

A personalized medication dosing algorithm that is robust to missing data and 29% more accurate than intensive care staff.

An open-source tool for the transcription of paper-based medical spreadsheets.

Decisions about patient care, especially in the ICU, are complicated. Doctors aren't machines; they also have "gut feelings" about their patients.

Translating Speech into Text

While audio is relatively easy to record, it remains a challenge to automatically diarize (who spoke when?), decode (what did they say?), and assess a subject’s cognitive health.

An Arabic speech-to-text system developed using 10 years of news data, exploring a range of neural network topologies.

An investigation of lexical modeling, covering diacritics, pronunciation rules, and acoustic-based pronunciation modeling.